Hi - if you’re reading this, it was nice to meet you (or I hope to meet you) at NAMM, and thanks for stopping by.

My day job has been as an AI research/product lead (e.g. previously building voice AI models at Microsoft). But I’m also a lifelong audiophile, which is what brings me to NAMM this year for the first time!

I’m here to learn about music tech, and whether/how AI is finding valuable use today.

> The great benefit of computer sequencers is that they remove the issue of skill, and replace it with the issue of judgement. - Brian Eno

While this is playing out in pre-recorded composition/production today, I’m particularly interested in the gap between production and live performance, whether on stages or streams. It’s less well known, but AI can also be used in real time.

If any of this sounds interesting, whether you have the artist/creator’s or the engineer/tinkerer’s perspective, do reach out - I’d love to talk.

You can find me on LinkedIn, or drop me a line at akashmjn.1@gmail.com.

p.s. Shoutout to my friend/instigator Manaswi (at MIT Media Lab) who got me into this niche community.

NAMM Bonus

Audiophile Evidence

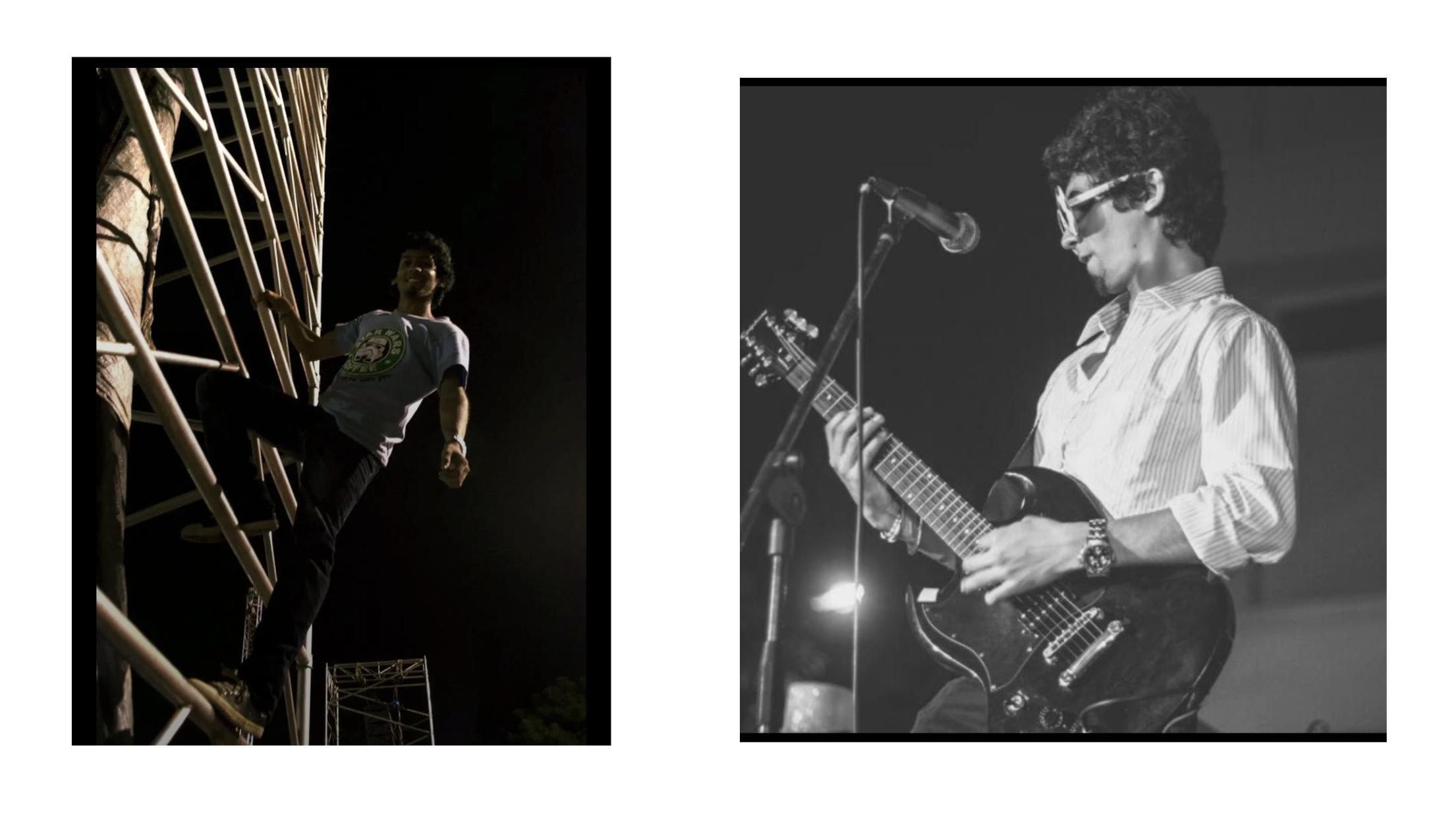

- (left) Elated (on a stage truss) when I first learnt about line arrays, after organizing a sold-out EDM gig in college

- (right) Wannabe John Mayer :)